Parquet Column Cannot Be Converted In File

Learn how to fix this error when reading decimal data in Parquet format and writing to a Delta table. It typically shows up when trying to update or display a DataFrame built from several Parquet files, one of which has a problem. One report reads: "I encountered the following error, 'Parquet column cannot be converted in file'; PySpark expected string but found INT32. I tried to convert the ..." Spark uses the native data types stored in the Parquet files (whatever data type was originally written to the .parquet files) at runtime, so you can start by checking the data format of the affected column, here the id column. If you have decimal type columns in your source data, the solution is to disable the vectorized Parquet reader.
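
As a rough illustration of that fix, the sketch below turns the vectorized reader off on an existing session. It assumes `spark` is an active `SparkSession` and uses the Spark SQL setting `spark.sql.parquet.enableVectorizedReader`; the helper name `disable_vectorized_parquet_reader` is made up for this example.

```python
# Setting that controls Spark's vectorized Parquet reader.
VECTORIZED_READER_KEY = "spark.sql.parquet.enableVectorizedReader"


def disable_vectorized_parquet_reader(spark):
    """Turn off the vectorized Parquet reader for this session.

    `spark` is assumed to be an active SparkSession. The helper name is
    hypothetical; only `spark.conf.set` is Spark's own API.
    """
    spark.conf.set(VECTORIZED_READER_KEY, "false")
    return spark
```

Call `disable_vectorized_parquet_reader(spark)` before `spark.read.parquet(...)`. The setting applies to the whole session, and scans may run slower with the non-vectorized reader, so it may be worth restoring it to `"true"` once the affected files have been read.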

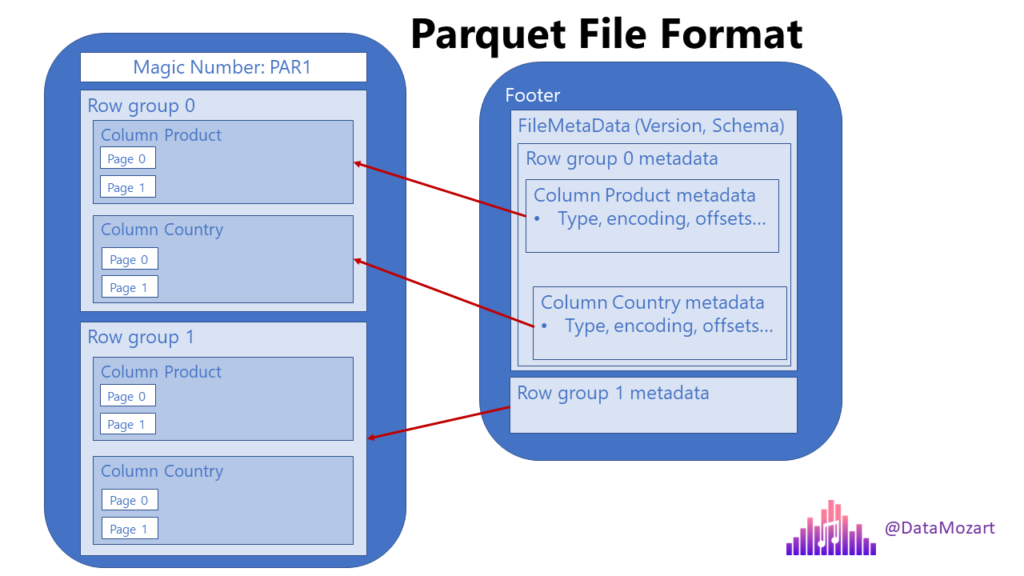

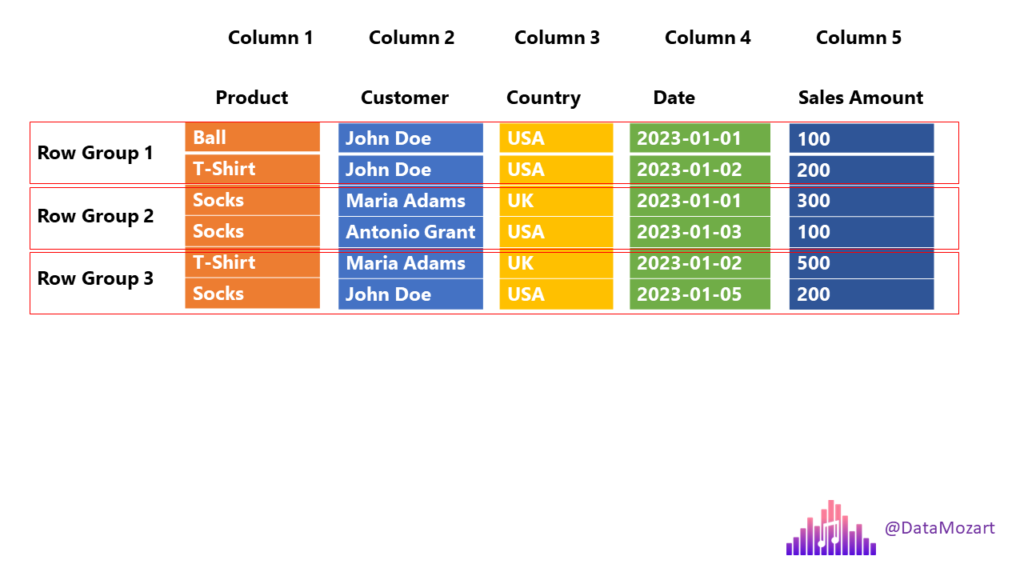

Parquet file format everything you need to know! Data Mozart

Why is Parquet format so popular? by Mori Medium

Demystifying the use of the Parquet file format for time series SenX

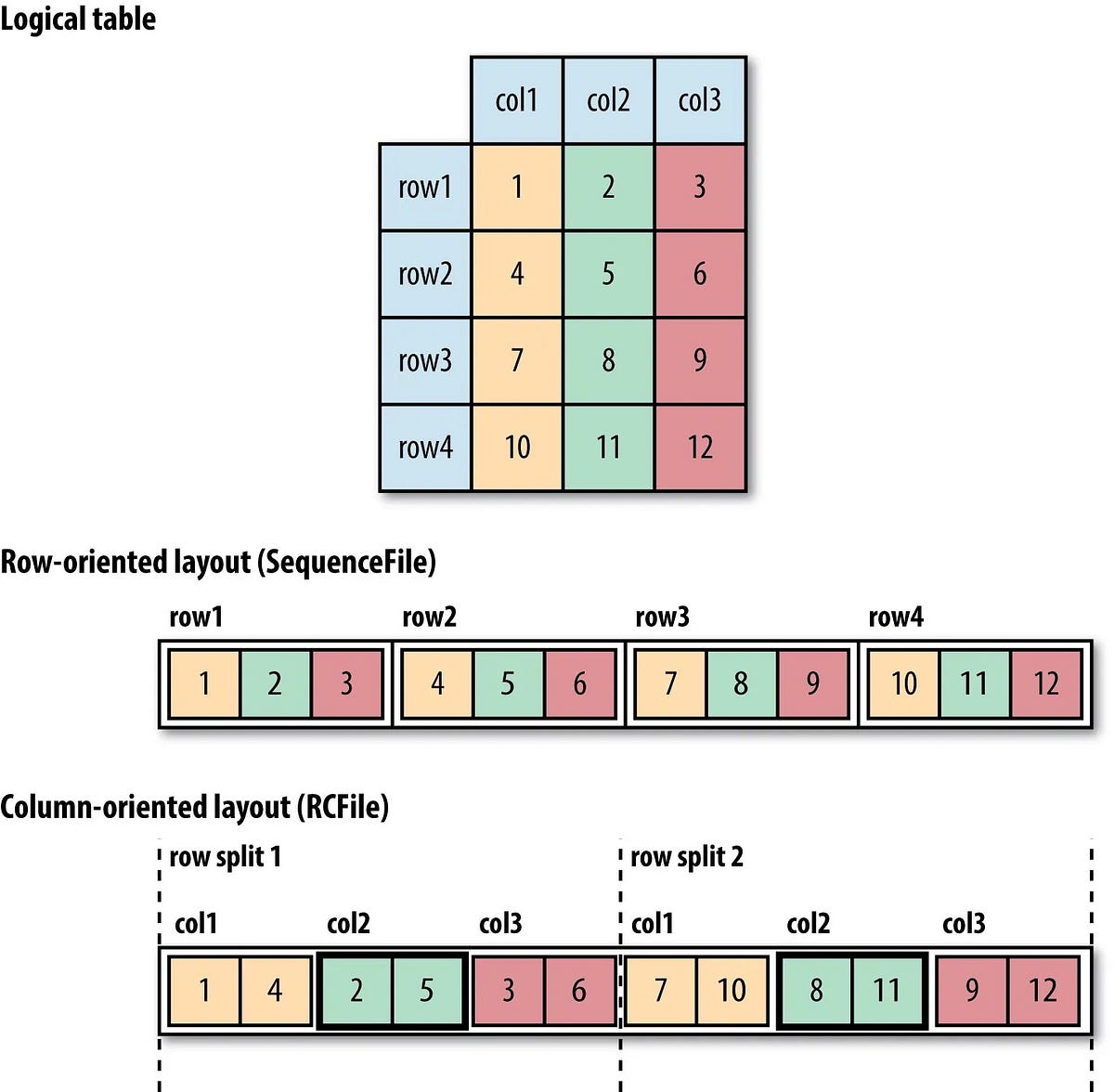

Big data file formats: AVRO, Parquet, Optimized Row Columnar (ORC)

Spatial Parquet A Column File Format for Geospatial Data Lakes

Understanding Apache Parquet Efficient Columnar Data Format

Parquet stores column types in the file itself, so changing only the Glue catalog cannot change a column's type (ablog)

Parquet Software Review (Features, Pros, and Cons)

When Trying To Update Or Display The Dataframe, One Of The Parquet Files Is Having Some Issue, Parquet Column Cannot Be Converted.

This is the situation in which the error was reported: "Parquet column cannot be converted in file", with PySpark expecting string but finding INT32. The same error can occur when reading decimal data in Parquet format and writing to a Delta table. You can try to check the data format of the id column in each file to find the one that differs. The solution is to disable the vectorized Parquet reader.
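
The check on the id column can be sketched as a comparison of per-file schemas. This is a minimal example, not a definitive tool: it assumes you have already collected each file's column types into a plain dictionary (for instance from `pyarrow.parquet.read_schema(path)`, if PyArrow is available, or by reading one file at a time with Spark). The function name `find_type_conflicts` and the file names are hypothetical.

```python
from collections import defaultdict


def find_type_conflicts(file_schemas):
    """Report columns whose type differs between files.

    file_schemas maps a file path to {column name: type name}.
    Returns {column: {type name: [paths with that type]}} for every
    column that appears with more than one type.
    """
    seen = defaultdict(lambda: defaultdict(list))
    for path, columns in file_schemas.items():
        for column, type_name in columns.items():
            seen[column][type_name].append(path)
    return {
        column: {t: sorted(paths) for t, paths in types.items()}
        for column, types in seen.items()
        if len(types) > 1
    }


# Hypothetical schemas: the second file stores id as int32.
schemas = {
    "part-0.parquet": {"id": "string", "amount": "decimal(10,2)"},
    "part-1.parquet": {"id": "int32", "amount": "decimal(10,2)"},
}
conflicts = find_type_conflicts(schemas)
```

Here `conflicts` contains only the `id` column, listing which file stores it as `string` and which as `int32`; `amount` is omitted because both files agree on its type.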

Spark Will Use Native Data Types In Parquet (Whatever Original Data Type Was There In .parquet Files) During Runtime.

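Because the types come from the files themselves, a column that was written as INT32 is read as INT32 even where a string is expected, which is what the error reports. If you have decimal type columns in your source data, you should disable the vectorized Parquet reader.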
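
Another workaround that is sometimes used, and is not described in the snippets above, is to read each file on its own so that Spark takes the type from that file, cast the column to one common type, and then combine the results. The sketch below assumes `spark` is an active `SparkSession`; the helper name `read_with_common_type` and its parameters are hypothetical.

```python
from functools import reduce


def read_with_common_type(spark, paths, column, target_type):
    """Read Parquet files one by one and cast `column` to `target_type`.

    Each file is read separately, so its own stored type is used, and
    the column is cast before the DataFrames are unioned by name.
    """
    if not paths:
        raise ValueError("at least one path is required")
    frames = []
    for path in paths:
        df = spark.read.parquet(path)
        frames.append(df.withColumn(column, df[column].cast(target_type)))
    return reduce(lambda left, right: left.unionByName(right), frames)
```

For example, `read_with_common_type(spark, paths, "id", "string")` would return one DataFrame in which id is a string for every file. Reading files individually gives up some parallelism in planning, so this is better suited to a one-off repair than to a regular job.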